Sharingan

Real-time gaze following

RUN

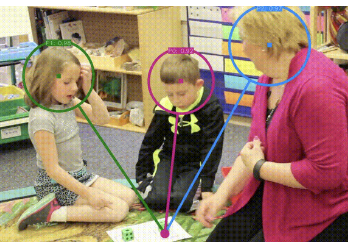

Sharingan is a gaze-following model built on a Vision Transformer (ViT-12) encoder with a conditional DPT decoder. Unlike gaze estimation tools that predict where someone's eyes are pointing in degrees, Sharingan predicts the gaze target directly in the scene — the pixel location that a person is looking at.

The model takes a full scene image and one or more head crops, then outputs a 64×64 gaze heatmap per person and an in/out-of-frame probability. In this web implementation, up to 3 people share a single ViT forward pass (N=3 batch), so the backbone runs once regardless of how many people are in the frame.

Face detection uses MediaPipe BlazeFace (short-range model). The ONNX model runs entirely in the browser via ONNX Runtime Web with WebGPU acceleration. A discrete or integrated GPU is required; WASM fallback is not supported due to speed constraints.

The tool supports both live webcam and uploaded video sources. In video mode the file is processed frame-by-frame so every frame receives a full inference pass.

Output columns

Each recorded row corresponds to one person in one frame. Beyond the basic face and gaze coordinates, the CSV includes gaze vector metrics, heatmap uncertainty metrics, and pairwise Joint Visual Attention (JVA) scores.

| Column | Description |

|---|---|

frame | Frame index (0-based) |

timestamp | Video timestamp in seconds |

face_index | Person index within the frame (0–2) |

face_x1 | Face bounding box — left edge (pixels) |

face_y1 | Face bounding box — top edge (pixels) |

face_x2 | Face bounding box — right edge (pixels) |

face_y2 | Face bounding box — bottom edge (pixels) |

gaze_x | Predicted gaze target — x (pixels) |

gaze_y | Predicted gaze target — y (pixels) |

inout_prob | Probability that gaze target is in-frame (0–1) |

| Gaze vector | |

gaze_vx | Unit gaze direction — x component (face center → gaze target) |

gaze_vy | Unit gaze direction — y component |

gaze_angle_deg | Gaze angle in degrees (atan2; 0° = right, 90° = down) |

gaze_dist_px | Pixel distance from face center to predicted gaze target |

| Heatmap metrics | |

hm_peak | Peak probability of the softmax heatmap — higher means more confident gaze target |

hm_entropy | Normalised Shannon entropy of the heatmap (0 = perfectly certain, 1 = uniform) |

hm_spread_px | Weighted spatial standard deviation of the heatmap in pixels (RMS of x and y) |

| Joint Visual Attention (JVA) | |

jva_partner_idx | Face index of the best JVA partner in the frame (−1 if only one person detected) |

jva_gaze_dist_px | Pixel distance between this person's gaze target and their partner's gaze target |

jva_dir_sim | Cosine similarity of the two gaze direction vectors (−1 = opposite, +1 = identical) |

jva_hm_overlap | Bhattacharyya coefficient between the two softmax heatmaps (0 = no overlap, 1 = identical) |

jva_score | Combined JVA score averaging gaze proximity, direction similarity, and heatmap overlap (0–1) |

tag | User-defined label from the recording bar |

Metrics reference

Gaze vector

Derived from the straight line between the face-bounding-box center and the argmax of the

predicted heatmap. gaze_angle_deg follows standard atan2 convention

(0° = rightward, angles increase clockwise). gaze_dist_px is a proxy for

how far the person is looking relative to their own position in the frame.

Heatmap uncertainty

The raw 64×64 model output is converted to a softmax probability distribution before

computing metrics. hm_peak and hm_entropy measure prediction

confidence from complementary angles — a high-entropy, low-peak heatmap indicates the

model is uncertain about where the person is looking. hm_spread_px captures

the spatial extent of the probable gaze region.

Joint Visual Attention

JVA metrics are computed pairwise: each person is matched to the partner whose

jva_score is highest. The score combines three cues — spatial proximity of

gaze targets (falls to 0 at ~25% of frame diagonal), cosine similarity of gaze directions,

and the Bhattacharyya coefficient between heatmaps. A jva_score near 1

indicates two people are very likely attending to the same location.